Microsoft Research demos smart interactive displays

Microsoft Research has put out no shortage of impressive tech demos. Some evolve into consumer products like the Kinect, while others are geared towards institutional use, such as the Surface, and in some cases trickle down to consumer tech. In a recent video, Director of Microsoft Applied Sciences Steven Bathiche demonstrates some of the group’s latest research into smart interactive displays, from “capturing light from the user to sending light to the user’s eyes”. Consider it a sort of mashup of Kinect and Surface, with the ability to display different information to multiple users, as well as autostereoscopic (glasses-free 3D) images.

Two elements are really interesting. The first is the ‘wedge’ display, which uses a Kinect to track the location of two people and then redirects light to them, so that each sees a different image on the same monitor, which opens up a number of possibilities. It could also drastically cut power consumption for portable devices, as the light can be directed to the user, while providing some screen privacy out in public. The tracking can also send two separate images to a user’s eyes, which gives a glasses-free 3D effect.

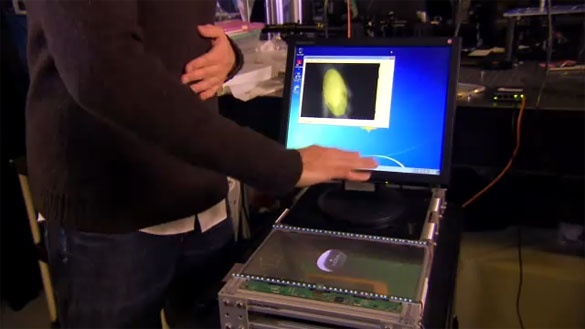

Also impressive was the spatial recognition built into OLED monitors. The basic idea is that since there are ‘gaps’ between the pixels in OLED screens, they can use depth sensing to allow manipulation of on-screen elements without touching the screen. The depth sensors can detect depths of up to approximately a metre away from the screen. Rolled together, it all adds up to some of the most impressive Natural User Interface demos in a long time.
